XTimes

Editor's Note

This week's stories find us in a remarkable moment — one in which humanity is simultaneously reaching outward toward the Moon and inward toward some of the most fundamental questions about power, purpose, and accountability.

Four astronauts are returning from the farthest point humans have ever traveled in space, breaking a record set more than half a century ago. Meanwhile, back on Earth, a courtroom drama is unfolding over who controls the most powerful AI laboratory in the world, a state is positioning itself as the conscience of artificial intelligence regulation, and a company whose products we carry in our pockets is wrestling with the pressures of innovation in a competitive age.

Taken together, these stories remind us that progress is never just a technical achievement. It is always also a human one — filled with ambition, conflict, and the ongoing negotiation between where we are and where we are trying to go.

Top Stories

Artemis II: Humans Venture Farther Into Space Than Ever Before

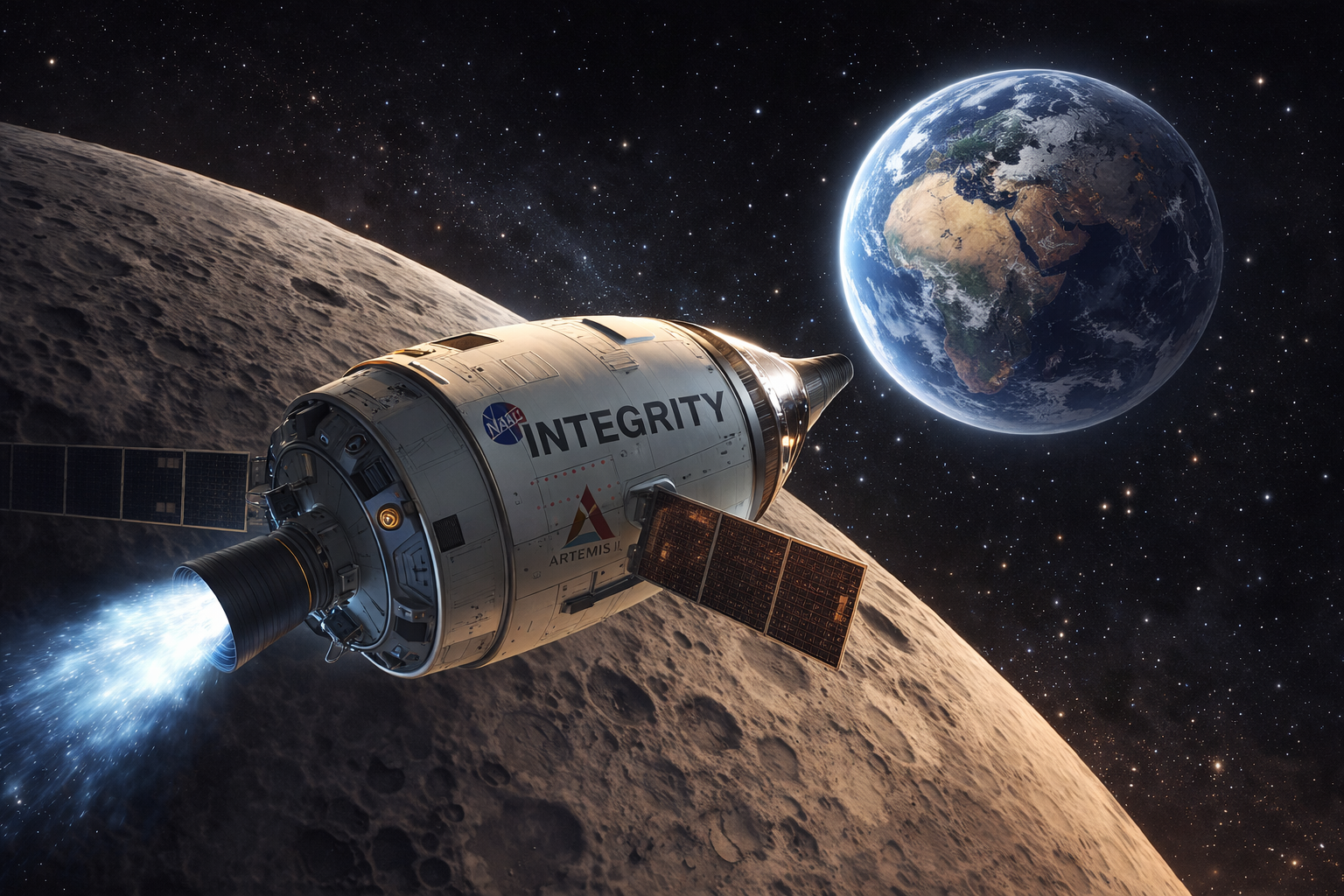

NASA's Artemis II mission is in its final days, with four astronauts (NASA's Reid Wiseman, Victor Glover, and Christina Koch, and the Canadian Space Agency's Jeremy Hansen) aboard the Orion spacecraft, named Integrity, now heading home after a historic journey around the Moon.

On April 6, six days into the mission, the crew broke the record for the farthest distance any human has ever traveled from Earth, reaching a maximum of 252,756 miles and placing them 4,111 miles farther from Earth than the Apollo 13 crew in 1970 (NASA). The milestone came during an extraordinary lunar flyby in which the crew witnessed Earthrise, observed the solar corona during a total solar eclipse visible only from their vantage point in deep space, and studied ancient lunar craters, including the 3.8-billion-year-old Orientale basin.

On April 7, the Orion spacecraft completed its first return correction burn, igniting its thrusters for 15 seconds and beginning the journey back toward Earth (NASA). Splashdown is scheduled off the coast of San Diego on the evening of Friday, April 10 (NASA).

Source: NASA Artemis Blog | NASA Mission Overview

Why it matters: Artemis II is not merely a symbolic return to deep space; it is a critical engineering test of the systems that will carry humans back to the lunar surface and, eventually, to Mars. Every hour of data collected, every system evaluated, and every record broken brings us measurably closer to becoming a multi-world species. This week, four human beings traveled farther from home than anyone ever has. That fact alone deserves our full attention.

Musk vs. Altman: The Battle Over OpenAI's Soul Heads to Trial

The long-running legal and personal conflict between Elon Musk and OpenAI CEO Sam Altman escalated significantly this week, as the case moves toward a jury trial set to begin April 27 in Oakland, California.

In a court filing Tuesday, Musk's lawyers laid out the specific remedies their client is seeking if a judge and jury determine that Altman and OpenAI defrauded him, including an order removing Altman as a director from the nonprofit board and removing both Altman and president Greg Brockman as officers of the for-profit arm (CNBC). Musk also asked that any damages awarded, potentially as high as $134 billion, be directed to OpenAI's nonprofit charitable arm rather than to himself (Gizmodo).

The lawsuit stems from Musk's claim that OpenAI "assiduously manipulated" him into donating $38 million based on promises that the organization would remain a nonprofit (CNBC). OpenAI has pushed back forcefully: its strategy chief wrote to the attorneys general of California and Delaware urging them to investigate what he described as "improper and anti-competitive behavior" by Musk, including allegations that Musk coordinated opposition efforts with Meta CEO Mark Zuckerberg (CNBC). The company has also characterized the lawsuit as a harassment campaign driven by ego and competitive rivalry.

Why it matters: Beneath the spectacle of two powerful figures in conflict lies a genuinely important question: what obligations do those who create powerful institutions — especially those built around a public mission — carry when those institutions evolve in unexpected directions? Whether Musk's motives are principled or self-interested, the trial will force courts and the public to grapple with questions of accountability, governance, and the ethics of transforming a public good into private profit.

California Takes the Lead on AI Regulation — and Sets a National Standard

While the federal government has largely held back from regulating artificial intelligence, California has moved decisively to fill the vacuum, enacting a wave of AI laws that are now taking effect and shaping how the technology is developed and deployed nationwide.

Among the most significant measures is SB 53, the Transparency in Frontier AI Act, which requires large AI developers to publicly disclose how they plan to mitigate potentially catastrophic risks and to report critical safety incidents to the state's Office of Emergency Services (Buchanan Ingersoll & Rooney). Other new laws require chatbot platforms to clearly disclose when users are interacting with AI, ban algorithmic price-fixing, and prohibit AI systems from falsely implying they are operated by licensed healthcare professionals (Pillsbury Winthrop Shaw Pittman).

Most recently, on March 30, Governor Gavin Newsom signed an executive order directing state agencies to develop certification requirements for companies seeking to provide AI-enabled products or services to California's government (Ropes & Gray LLP), effectively using the state's enormous purchasing power as a regulatory lever. Because companies cannot afford to lose access to the world's fourth-largest economy, analysts expect California's rules to function as a de facto national standard, even as the White House pushes for a federal framework that would preempt state-level AI laws (Axios).

Source: Axios | Ropes & Gray

Why it matters: Artificial intelligence is advancing far faster than most governments can regulate it. California's assertive approach — combining legislation, executive action, and procurement standards — offers a model for how democracies can begin to shape a technology that, left entirely to market forces, may reshape them. Whether or not you agree with every provision, the state's effort signals a growing recognition that the development of powerful AI is too consequential to remain ungoverned.

Apple's Foldable iPhone Hits Turbulence — and So Does Its Stock

Apple's long-anticipated entry into the foldable smartphone market has run into significant engineering difficulties, rattling investor confidence and raising questions about the company's near-term product roadmap.

According to a report from Nikkei Asia, Apple has encountered setbacks in the engineering test phase of its first foldable iPhone, with issues proving more complex and taking longer to resolve than expected (TheStreet). The challenges center on hinge reliability, display durability through repeated folding cycles, and fitting components into an unusually thin form factor (TheStreet). The device had been expected to launch alongside the iPhone 18 in September 2026 and was projected to cost as much as $2,400.

Apple shares initially fell as much as 5% on the news, then recovered slightly after Bloomberg reported that the foldable phone remains on track for its September debut (CNBC). The conflicting reports left investors uncertain, and Apple has declined to comment publicly.

Why it matters: Apple's entry into foldables has been one of the most anticipated product launches in the smartphone industry in years. The difficulties the company is encountering are a useful reminder that even the world's most capable engineering organizations struggle with genuinely new hardware categories. More broadly, it illustrates how quickly investor sentiment can shift when the pace of innovation slows — and how much pressure companies face to keep delivering the next transformative product on schedule.

Quick Picks

Researchers Develop AI That Could Use Up to 100 Times Less Energy

Researchers at Tufts University's School of Engineering have developed a proof-of-concept AI system that could use up to 100 times less energy than current systems while producing more accurate results (Tufts Now). The approach, called neuro-symbolic AI, combines conventional neural networks with symbolic reasoning: the use of rules and logic, similar to how humans break problems into steps and categories (Tufts Now).

In tests using the Tower of Hanoi puzzle, the neuro-symbolic system achieved a 95% success rate, compared with 34% for standard systems, and required only 34 minutes to train versus more than a day and a half for conventional models (Tufts Now). AI already accounts for more than 10% of U.S. electricity consumption, a figure expected to double by 2030 (ScienceDaily).

If this approach can be scaled, it could represent one of the most meaningful developments in making AI both more capable and more sustainable. Source: Tufts University / SciTechDaily

OpenAI Prepares for a Public Offering

OpenAI has surpassed $25 billion in annualized revenue and is reportedly taking early steps toward a public listing, potentially as soon as late 2026 (Crescendo AI). The news arrives as the company prepares for its legal battle with Elon Musk, underscoring how rapidly the AI sector is maturing into a major economic force. Rival Anthropic is approaching $19 billion in annualized revenue, another sign that AI is among the fastest-growing sectors of the global technology market. Source: Crescendo AI News

Judge Blocks Government from Labeling Anthropic a National Security Risk

In a significant legal development, a federal judge blocked the U.S. government from designating Anthropic — the maker of Claude — as a "supply chain risk," a label that would have severely restricted federal agencies from using its AI systems. The case arose after Anthropic declined to allow its technology to be used for certain military applications, and Anthropic successfully argued that the government had not adequately justified the designation. The ruling raises important questions about how national security frameworks designed for foreign threats are being applied to domestic AI developers. Source: AP News

The Optimist's Reflection

To the Moon and Back — and What It Means for All of Us

By Todd Eklof

As I write this, four human beings are making their way home from the Moon. They have traveled farther from Earth than any person ever has. They have watched our planet rise over a cratered lunar surface. They have seen the Sun disappear behind the Moon and watched its corona glow in the silence of deep space.

It is easy to let moments like this slip by unnoticed, crowded out by the noise of legal battles, stock prices, and political maneuvering — all of which also appeared in this week's pages. But I want to suggest that the Artemis II mission deserves more than a passing mention, because it represents something the rest of this week's stories also point toward, though in less obvious ways.

We are a species in the process of renegotiating our relationship with our own power.

The Musk-Altman dispute, for all its personal drama, is ultimately a question about who controls the most consequential technology of our time, and whether the promises made when that technology was born still mean anything. California's AI regulations are a society's attempt to assert that some things matter more than market efficiency. Even Apple's engineering struggles remind us that ambition and capability are not always perfectly aligned.

And then there are four people, circling the Moon in a capsule named Integrity, sending back images of a blue world that looks — from a quarter-million miles away — astonishingly small, and astonishingly fragile.

The name "Integrity" is no accident. The word means wholeness. It means the alignment between what we say and what we do, between what we build and what we intend. It is the quality we most need in our institutions, in our technology, and in ourselves as we extend our reach further than we have ever reached before.

Welcome home, Artemis II. And welcome to the work that awaits us here.

Exponential Times is published weekly by Singularity Sanctuary. To subscribe or learn more, visit singularitysanctuary.com.